Artificial Intelligence (AI) presents both a monumental opportunity for advancement and a significant risk of amplifying existing societal inequities. This dual potential makes the careful examination of AI’s development and implementation essential. By understanding the sources of AI bias and employing strategies to mitigate these biases, we can guide AI towards a future that upholds equity and fairness for all.

The Roots of AI Bias

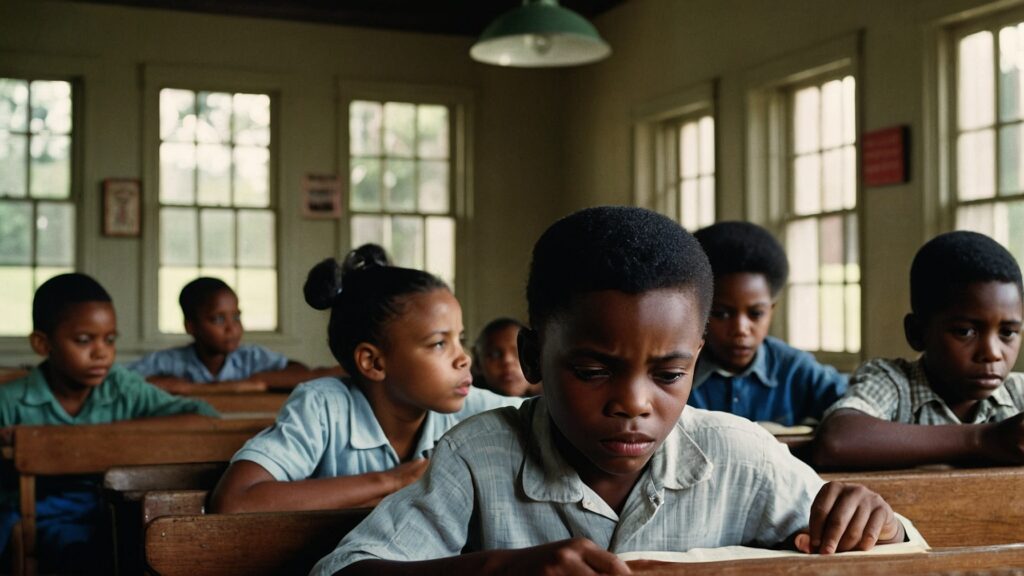

AI bias can stem from training data that reflects existing societal prejudices. For instance, when training datasets consist predominantly of certain demographics, AI models may perform poorly for underrepresented groups (MIT News, 2021). Bias in AI is not solely a technical problem, however. It is deeply entwined with human and systemic biases, so AI systems can inadvertently perpetuate discrimination embedded within societal institutions (NIST, 2022).

Strategies for Mitigating AI Bias

Promoting Diversity in AI Development

Ensuring diversity within AI development teams is crucial. Diverse teams bring varied perspectives, which reduces the risk of overlooking potential biases and makes AI technologies more inclusive (NIST, 2022).

Developing Inclusive Data Sets

The diversity of training data is paramount to reducing bias. Research suggests that neural networks can overcome dataset biases when the training data encompasses a broad spectrum of perspectives and representations. Achieving this diversity requires intentional effort in dataset design, with a focus on inclusivity rather than sheer data volume (MIT News, 2021).
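One simple way to make dataset design intentional is to measure how each group is represented before training. The sketch below is illustrative only: the function names, the toy group labels, and the 20% cutoff are assumptions made for this example, not values taken from the cited research.

```python
from collections import Counter


def group_shares(groups):
    """Return each group's share of the dataset as a fraction of all rows."""
    counts = Counter(groups)
    total = len(groups)
    return {group: count / total for group, count in counts.items()}


def underrepresented(groups, min_share=0.2):
    """List groups whose share is below min_share (a hypothetical cutoff)."""
    shares = group_shares(groups)
    return sorted(group for group, share in shares.items() if share < min_share)


# Toy group labels, one per training example (made up for illustration).
sample = ["A"] * 70 + ["B"] * 25 + ["C"] * 5

print(group_shares(sample))
print(underrepresented(sample))
```

A check like this only flags imbalance in the labels that have been recorded. Deciding which groups matter, and what share counts as adequate, remains a judgment call for the people designing the dataset.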

Embracing Socio-Technical Approaches

Addressing bias in AI necessitates a socio-technical approach that considers AI within its broader societal context. This approach calls for interdisciplinary efforts to understand and mitigate AI’s societal impacts, emphasizing that purely technical solutions may fall short in addressing the root causes of bias (NIST, 2022).

Defining and Measuring Fairness

The complexity of defining and measuring fairness in AI reflects the variety of societal norms and legal standards. Multiple definitions of fairness exist, and choosing among them often involves trade-offs, both between different notions of fairness and against other objectives such as accuracy. Understanding these trade-offs is crucial for developing fair AI systems (McKinsey & Company, 2021).
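To make the trade-off concrete, the sketch below compares two commonly used fairness measures on the same toy predictions. Demographic parity compares how often each group receives a positive prediction, while equal opportunity compares true positive rates. These two definitions were chosen for illustration and are not named in the cited sources; the data and function names are likewise hypothetical.

```python
def by_group(values, groups, target):
    """Keep only the values that belong to the target group."""
    return [v for v, g in zip(values, groups) if g == target]


def selection_rate(y_pred):
    """Fraction of examples that received a positive prediction."""
    return sum(y_pred) / len(y_pred)


def true_positive_rate(y_true, y_pred):
    """Fraction of truly positive examples that were predicted positive."""
    hits = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(hits) / len(hits)


def demographic_parity_gap(y_pred, groups, a, b):
    """Absolute difference in selection rate between groups a and b."""
    return abs(selection_rate(by_group(y_pred, groups, a))
               - selection_rate(by_group(y_pred, groups, b)))


def equal_opportunity_gap(y_true, y_pred, groups, a, b):
    """Absolute difference in true positive rate between groups a and b."""
    tpr_a = true_positive_rate(by_group(y_true, groups, a),
                               by_group(y_pred, groups, a))
    tpr_b = true_positive_rate(by_group(y_true, groups, b),
                               by_group(y_pred, groups, b))
    return abs(tpr_a - tpr_b)


# Toy data: four people per group, with different base rates of the outcome.
groups = ["A"] * 4 + ["B"] * 4
y_true = [1, 1, 0, 0, 1, 1, 1, 1]
y_pred = [1, 1, 0, 0, 1, 1, 0, 0]

print(demographic_parity_gap(y_pred, groups, "A", "B"))         # 0.0
print(equal_opportunity_gap(y_true, y_pred, groups, "A", "B"))  # 0.5
```

In this toy case both groups are selected at the same rate, so the demographic parity gap is zero, yet qualified members of group B are recognized only half as often as those of group A. A system can therefore look fair under one definition and unfair under another.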

Technical Innovations and Human Oversight

Recent advancements in AI have introduced methods for enforcing fairness constraints on AI models. However, despite technical progress, human oversight remains indispensable. Human judgment is essential in ensuring AI-supported decisions align with societal values of fairness and equity (McKinsey & Company, 2021).

Conclusion

The advancement of AI offers significant benefits but also poses the risk of exacerbating systemic inequities. Through a comprehensive understanding of AI biases and the implementation of effective mitigation strategies, we can navigate AI development towards a more equitable future. This journey requires continuous effort, interdisciplinary collaboration, and a commitment to upholding the principles of equity and fairness.

References

- National Institute of Standards and Technology (NIST). “There’s More to AI Bias Than Biased Data, NIST Report Highlights.” 2022.

- Massachusetts Institute of Technology (MIT) News. “Can machine-learning models overcome biased datasets?” 2021.

- McKinsey & Company. “Tackling bias in artificial intelligence (and in humans).” 2021.